Video Inpainting by Jointly Learning Temporal Structure and Spatial Details

Chuan Wang1 Haibin Huang1 Xiaoguang Han2 Jue Wang1

1Megvii (Face++) USA 2Shenzhen Research Inst. of Big Data, CUHK (Shenzhen)

The 33th AAAI Conference on Artificial Intelligence (AAAI 2019)

arXiv https://arxiv.org/abs/1806.08482

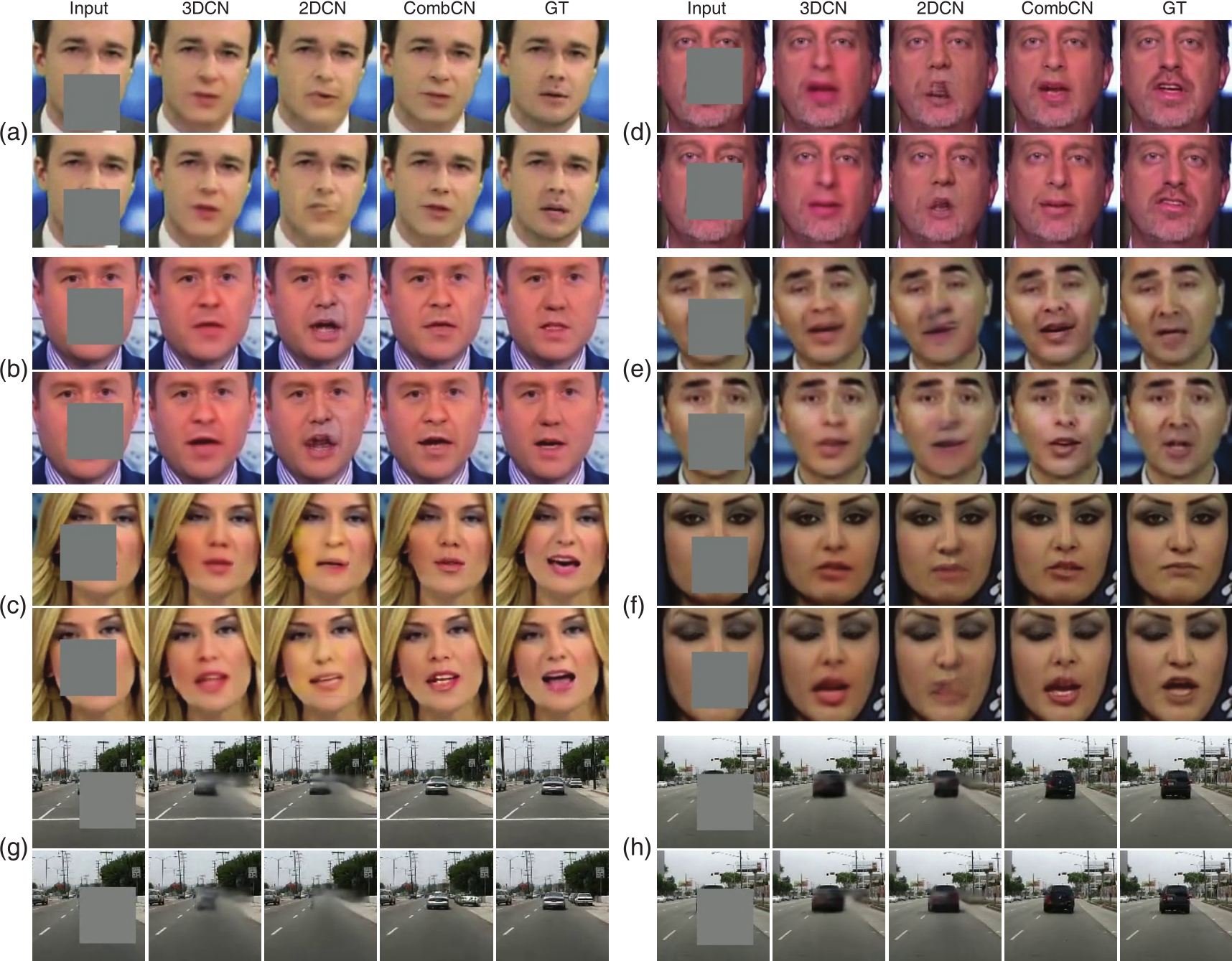

Figure: Inpainted frames on datasets FaceForensics (a ~ f) and Caltech (g, h). In each panel, the two rows represent two frames of a video, and the five columns from left to right are input, results by 3DCN, 2DCN and CombCN (ours), as well as the target ground truth.

Figure: Inpainted frames on datasets FaceForensics (a ~ f) and Caltech (g, h). In each panel, the two rows represent two frames of a video, and the five columns from left to right are input, results by 3DCN, 2DCN and CombCN (ours), as well as the target ground truth.

Abstract

We present a new data-driven video inpainting method for recovering missing regions of video frames. A novel deep learning architecture is proposed which contains two sub-networks: a temporal structure inference network and a spatial detail recovering network. The temporal structure inference network is built upon a 3D fully convolutional architecture: It only learns to complete a low-resolution video volume given the expensive computational cost of 3D convolution. The low resolution result provides temporal guidance to the spatial detail recovering network, which performs image-based inpainting with a 2D fully convolutional network to produce recovered video frames in their original resolution. Such two-step network design ensures both the spatial quality of each frame and the temporal coherence across frames. Our method jointly trains both sub-networks in an end-to-end manner. We provide qualitative and quantitative evaluation three datasets, demonstrating that our method outperforms previous learning-based video inpainting methods.

Downloads

|

|||||

|

|||||

|

|||||

|

|||||

|

Video Demo

Bibtex

@inproceedings{wang2018videoinp,

title={Video Inpainting by Jointly Learning Temporal Structure and Spatial Details},

author={Wang, Chuan and Huang, Haibin and Han, Xiaoguang and Wang, Jue},

booktitle={Proceedings of the 33th AAAI Conference on Artificial Intelligence},

year={2019}

}